I want to run the embedded_ssd_mobilenet_v1 model, which I trained with TensorFlow in Python, from the OpenCV C++ API. I did the same thing before with ssd_mobilenet instead of the embedded version; I ran into some problems, but finally solved them with help from other people. That model is too slow on my platform (Tinker Board), so I want to use the embedded version to get faster detection. I used the tools offered on this site to generate a .pbtxt file, and I use the .pb and .pbtxt files in the following code:

    #include <opencv2/opencv.hpp>
    #include <opencv2/dnn.hpp>
    #include <sstream>

    using namespace std;
    using namespace cv;

    const size_t inWidth = 300;
    const size_t inHeight = 300;
    const float WHRatio = inWidth / (float)inHeight;
    const char* classNames[] = { "background", "face" };

    int main() {
        String weights = "/data/bincheng.xiong/detect_head/pbfiles_embedded/pbfile1/frozen_inference_graph.pb";
        String prototxt = "/home/xbc/embedded_ssd.pbtxt";
        dnn::Net net = cv::dnn::readNetFromTensorflow(weights, prototxt);

        //VideoCapture cap("rtsp://admin:[email protected]:554/Streaming/Channels/001");
        VideoCapture cap(0);
        Mat frame;

        while (1) {
            cap >> frame;

            // Largest centered crop with the network's width/height ratio.
            Size frame_size = frame.size();
            Size cropSize;
            if (frame_size.width / (float)frame_size.height > WHRatio)
            {
                cropSize = Size(static_cast<int>(frame_size.height * WHRatio),
                                frame_size.height);
            }
            else
            {
                cropSize = Size(frame_size.width,
                                static_cast<int>(frame_size.width / WHRatio));
            }
            Rect crop(Point((frame_size.width - cropSize.width) / 2,
                            (frame_size.height - cropSize.height) / 2),
                      cropSize);

            // Build the input blob from the full frame and run the network.
            cv::Mat blob = cv::dnn::blobFromImage(frame, 1./255, Size(300, 300));
            //cout << "blob size: " << blob.size << endl;
            net.setInput(blob);
            Mat output = net.forward();
            //cout << "output size: " << output.size << endl;

            // One row per detection: [batchId, classId, confidence, left, top, right, bottom].
            Mat detectionMat(output.size[2], output.size[3], CV_32F, output.ptr<float>());

            frame = frame(crop);
            float confidenceThreshold = 0.001;

            for (int i = 0; i < detectionMat.rows; i++)
            {
                float confidence = detectionMat.at<float>(i, 2);
                if (confidence > confidenceThreshold)
                {
                    size_t objectClass = (size_t)(detectionMat.at<float>(i, 1));

                    // Scale the box coordinates to pixels of the cropped frame.
                    int xLeftBottom = static_cast<int>(detectionMat.at<float>(i, 3) * frame.cols);
                    int yLeftBottom = static_cast<int>(detectionMat.at<float>(i, 4) * frame.rows);
                    int xRightTop = static_cast<int>(detectionMat.at<float>(i, 5) * frame.cols);
                    int yRightTop = static_cast<int>(detectionMat.at<float>(i, 6) * frame.rows);

                    ostringstream ss;
                    ss << confidence;
                    String conf(ss.str());

                    Rect object((int)xLeftBottom, (int)yLeftBottom,
                                (int)(xRightTop - xLeftBottom),
                                (int)(yRightTop - yLeftBottom));
                    rectangle(frame, object, Scalar(0, 255, 0), 2);

                    // Draw the class label and confidence above the box.
                    String label = String(classNames[objectClass]) + ": " + conf;
                    int baseLine = 0;
                    Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
                    rectangle(frame, Rect(Point(xLeftBottom, yLeftBottom - labelSize.height),
                                          Size(labelSize.width, labelSize.height + baseLine)),
                              Scalar(0, 255, 0), CV_FILLED);
                    putText(frame, label, Point(xLeftBottom, yLeftBottom),
                            FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 0));
                }
            }

            namedWindow("image", CV_WINDOW_NORMAL);
            imshow("image", frame);
            waitKey(10);
        }
        return 0;
    }
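
The crop arithmetic in the loop above can be checked in isolation. This is only a small Python sketch of the same computation; the function name `center_crop_rect` and the 640x480 / 480x640 frame sizes are made up for illustration and do not come from my setup.

```python
def center_crop_rect(frame_w, frame_h, wh_ratio):
    """Return (x, y, w, h) of the largest centered crop with the given width/height ratio.

    Mirrors the cropSize / crop computation in the C++ loop.
    """
    if frame_w / float(frame_h) > wh_ratio:
        # Frame is wider than the target ratio: keep full height, trim the width.
        crop_w, crop_h = int(frame_h * wh_ratio), frame_h
    else:
        # Frame is taller than (or equal to) the target ratio: keep full width.
        crop_w, crop_h = frame_w, int(frame_w / wh_ratio)
    return ((frame_w - crop_w) // 2, (frame_h - crop_h) // 2, crop_w, crop_h)


# Hypothetical frame sizes, with the 300x300 network input ratio from the C++ code.
rect_landscape = center_crop_rect(640, 480, 300 / 300.0)
rect_portrait = center_crop_rect(480, 640, 300 / 300.0)
print(rect_landscape, rect_portrait)
```

Note that in the C++ listing the blob is built from the full frame, while the box coordinates are later scaled by the size of the cropped frame.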

I printed the bounding boxes produced by the TensorFlow Python code and by the OpenCV C++ code. The Python code outputs the following boxes:

    boxes: [[[0.48616847 0.32456273 0.5719043  0.36506736]
      [0.48817295 0.39137262 0.57161564 0.4317271 ]
      [0.17184295 0.31928855 0.27021247 0.36755735]
      [0.17288518 0.38449278 0.27023977 0.43242595]
      [0.49070644 0.45021996 0.5716888  0.48942813]
      [0.48250633 0.26584074 0.5715775  0.3070542 ]]]

and it detects the objects correctly.

The OpenCV C++ code outputs the following boxes:

    [0, 1, 0.39883164, -87.42878, -119.55261, 99.473885, 114.22792;
     0, 1, 0.13128266, -67.32534, -174.75551, 72.507866, 175.54341;
     0, 1, 0.072781183, -157.07892, -94.051201, 156.91415, 93.795418;
     0, 1, 0.054214109, -207.14185, -68.914009, 213.63901, 69.790916;]

and it detects the objects incorrectly.

Columns four to seven are the bounding box. The coordinates are outside the range [0, 1], and some are even negative. What is the reason for this? Could you please have a look? Thanks.
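
To compare the two outputs row by row, they first need the same column layout. The sketch below assumes the usual conventions: TensorFlow's `detection_boxes` are normalized `[ymin, xmin, ymax, xmax]`, and each OpenCV detection row is `[batchId, classId, confidence, left, top, right, bottom]`. The helper names and the scores `0.9` and `0.8` are placeholders for illustration (I did not print the scores); the box values are copied from the outputs above.

```python
import numpy as np


def tf_boxes_to_opencv_rows(boxes, scores, classes):
    """Reorder TF [ymin, xmin, ymax, xmax] boxes into OpenCV-style rows
    [batch_id, class_id, confidence, left, top, right, bottom]."""
    boxes = np.asarray(boxes, dtype=np.float32).reshape(-1, 4)
    scores = np.asarray(scores, dtype=np.float32).reshape(-1)
    classes = np.asarray(classes, dtype=np.float32).reshape(-1)
    ymin, xmin, ymax, xmax = boxes.T
    batch = np.zeros_like(scores)  # single image, so batch id is 0
    return np.stack([batch, classes, scores, xmin, ymin, xmax, ymax], axis=1)


def rows_out_of_range(rows, lo=0.0, hi=1.0):
    """Return indices of rows whose box columns (3..6) fall outside [lo, hi]."""
    coords = np.asarray(rows, dtype=np.float32)[:, 3:7]
    bad = np.any((coords < lo) | (coords > hi), axis=1)
    return np.nonzero(bad)[0]


# First two TF boxes from the Python output; scores are placeholders.
tf_boxes = [[0.48616847, 0.32456273, 0.5719043, 0.36506736],
            [0.48817295, 0.39137262, 0.57161564, 0.4317271]]
tf_rows = tf_boxes_to_opencv_rows(tf_boxes, scores=[0.9, 0.8], classes=[1, 1])

# Rows copied from the OpenCV C++ output.
opencv_rows = np.array([
    [0, 1, 0.39883164, -87.42878, -119.55261, 99.473885, 114.22792],
    [0, 1, 0.13128266, -67.32534, -174.75551, 72.507866, 175.54341],
    [0, 1, 0.072781183, -157.07892, -94.051201, 156.91415, 93.795418],
    [0, 1, 0.054214109, -207.14185, -68.914009, 213.63901, 69.790916],
], dtype=np.float32)

print("TF rows out of range:", rows_out_of_range(tf_rows))
print("OpenCV rows out of range:", rows_out_of_range(opencv_rows))
```

With this layout, every row from the C++ output is flagged as out of range, while the reordered TensorFlow rows are not.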

The Python code is as follows:

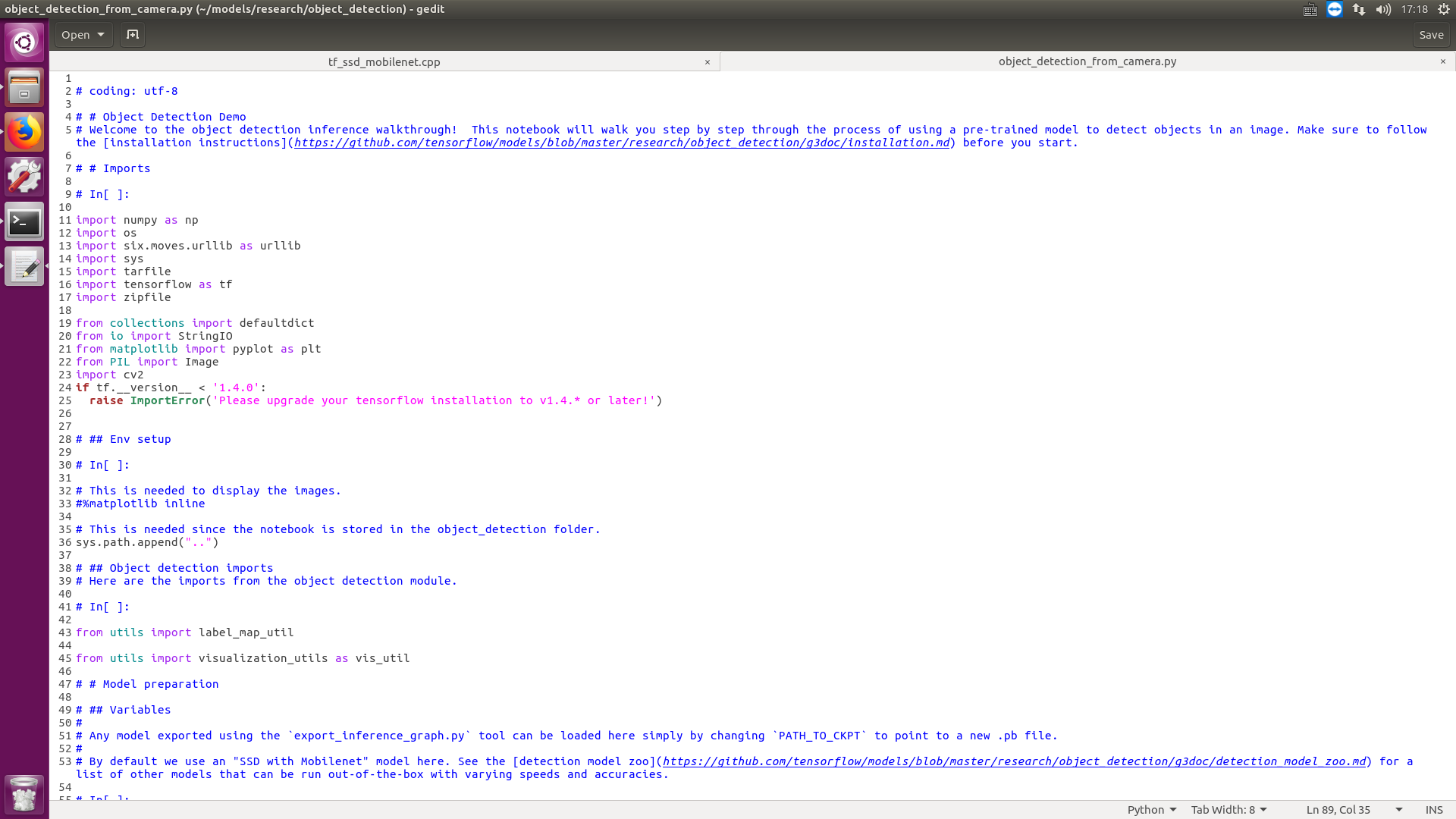

    # coding: utf-8

    # # Object Detection Demo
    # Welcome to the object detection inference walkthrough! This notebook will walk you step by step through the process of using a pre-trained model to detect objects in an image. Make sure to follow the installation instructions before you start.

    # # Imports

    # In[ ]:

    import numpy as np
    import os
    import six.moves.urllib as urllib
    import sys
    import tarfile
    import tensorflow as tf
    import zipfile

    from collections import defaultdict
    from io import StringIO
    from matplotlib import pyplot as plt
    from PIL import Image
    import cv2

    if tf.__version__ < '1.4.0':
        raise ImportError('Please upgrade your tensorflow installation to v1.4.* or later!')

    # ## Env setup

    # In[ ]:

    # This is needed to display the images.
    %matplotlib inline

    # This is needed since the notebook is stored in the object_detection folder.
    sys.path.append("..")

    # ## Object detection imports
    # Here are the imports from the object detection module.

    # In[ ]:

    from utils import label_map_util
    from utils import visualization_utils as vis_util

    # # Model preparation

    # ## Variables
    #
    # Any model exported using the export_inference_graph.py tool can be loaded here simply by changing PATH_TO_CKPT to point to a new .pb file.
    #
    # By default we use an "SSD with Mobilenet" model here. See the detection model zoo for a list of other models that can be run out-of-the-box with varying speeds and accuracies.

    # In[ ]:

    # What model to download.
    MODEL_NAME = 'ssd_mobilenet_v1_coco_2017_11_17'
    MODEL_FILE = MODEL_NAME + '.tar.gz'
    DOWNLOAD_BASE = 'http://download.tensorflow.org/models/object_detection/'

    # Path to frozen detection graph. This is the actual model that is used for the object detection.
    PATH_TO_CKPT = '/data/bincheng.xiong/detect_head/pbfiles_embedded/pbfile1/frozen_inference_graph.pb'
    PATH_TO_CKPT = '/data/bincheng.xiong/detect_head/pbfiles/pbfile1/frozen_inference_graph.pb'
    PATH_TO_CKPT = '/data/bincheng.xiong/detect_head/pbfiles_embedded/pbfile1/frozen_inference_graph.pb'

    # List of the strings that is used to add correct label for each box.
    PATH_TO_LABELS = '/home/xbc/models/research/object_detection/embedded_ssd_mobilenet_v1-xbc/head_label_map.pbtxt'

    NUM_CLASSES = 1

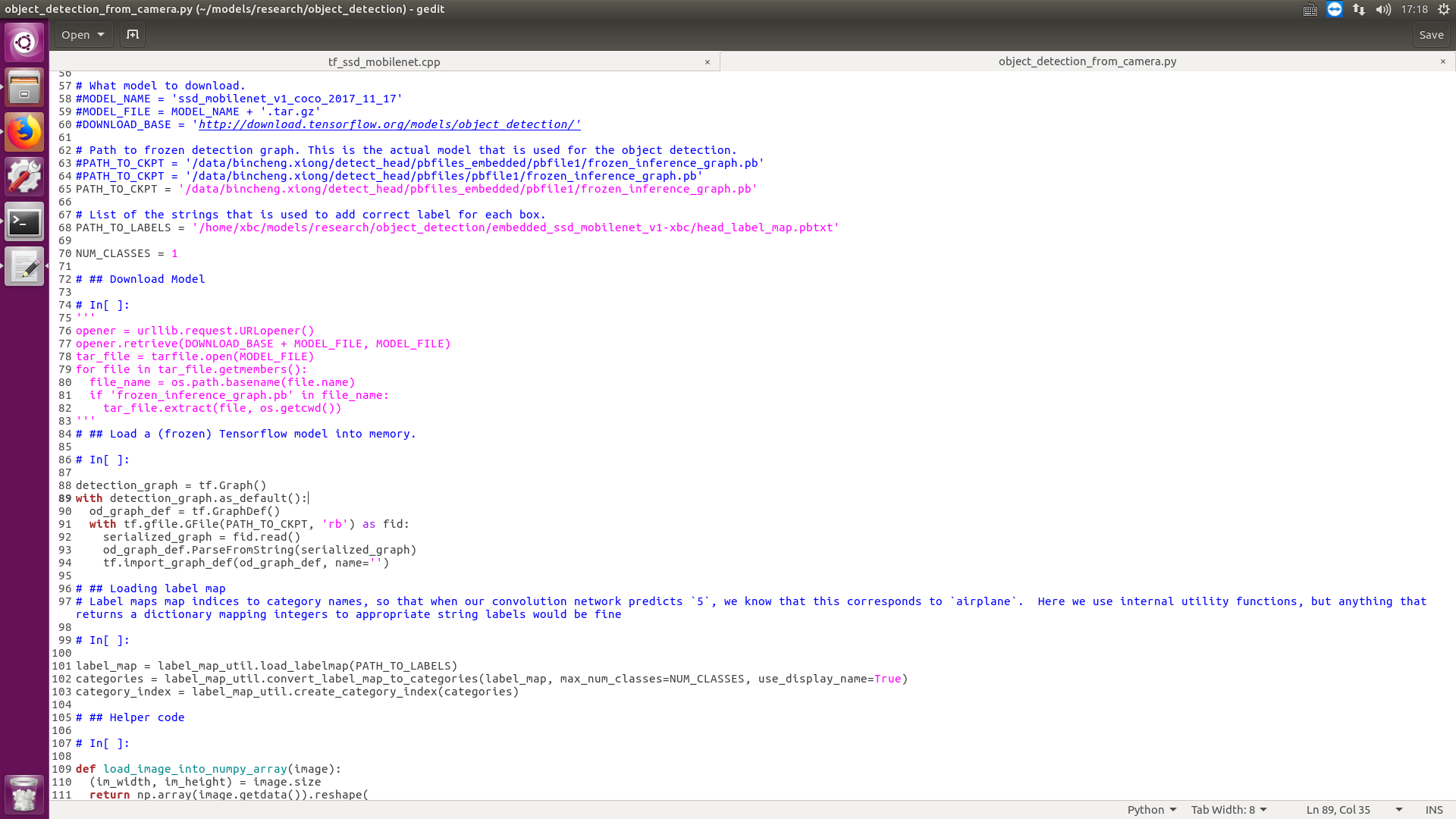

    # ## Download Model

    # In[ ]:

    '''
    opener = urllib.request.URLopener()
    opener.retrieve(DOWNLOAD_BASE + MODEL_FILE, MODEL_FILE)
    tar_file = tarfile.open(MODEL_FILE)
    for file in tar_file.getmembers():
        file_name = os.path.basename(file.name)
        if 'frozen_inference_graph.pb' in file_name:
            tar_file.extract(file, os.getcwd())
    '''

    # ## Load a (frozen) Tensorflow model into memory.

    # In[ ]:

    detection_graph = tf.Graph()
    with detection_graph.as_default():
        od_graph_def = tf.GraphDef()
        with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
            serialized_graph = fid.read()
            od_graph_def.ParseFromString(serialized_graph)
            tf.import_graph_def(od_graph_def, name='')

    # ## Loading label map
    # Label maps map indices to category names, so that when our convolution network predicts 5, we know that this corresponds to airplane. Here we use internal utility functions, but anything that returns a dictionary mapping integers to appropriate string labels would be fine

    # In[ ]:

    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(label_map, max_num_classes=NUM_CLASSES, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)

    # ## Helper code

    # In[ ]:

    def load_image_into_numpy_array(image):
        (im_width, im_height) = image.size
        return np.array(image.getdata()).reshape(
            (im_height, im_width, 3)).astype(np.uint8)

    # # Detection

    # In[ ]:

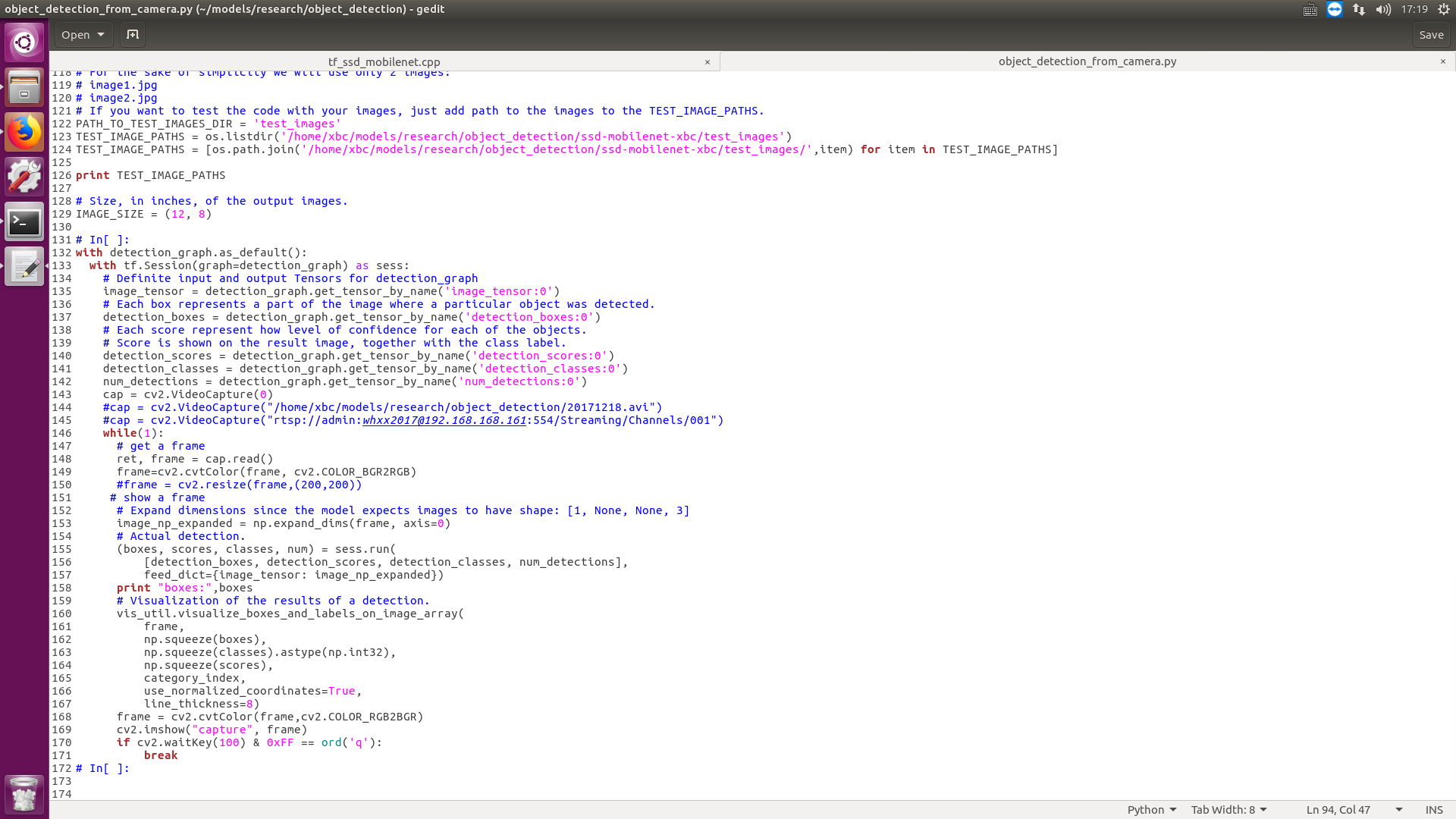

    # For the sake of simplicity we will use only 2 images:
    # image1.jpg
    # image2.jpg
    # If you want to test the code with your images, just add path to the images to the TEST_IMAGE_PATHS.
    PATH_TO_TEST_IMAGES_DIR = 'test_images'
    TEST_IMAGE_PATHS = os.listdir('/home/xbc/models/research/object_detection/ssd-mobilenet-xbc/test_images')
    TEST_IMAGE_PATHS = [os.path.join('/home/xbc/models/research/object_detection/ssd-mobilenet-xbc/test_images/',item) for item in TEST_IMAGE_PATHS]
    print TEST_IMAGE_PATHS

    # Size, in inches, of the output images.
    IMAGE_SIZE = (12, 8)

    # In[ ]:

    with detection_graph.as_default():
        with tf.Session(graph=detection_graph) as sess:
            # Define input and output Tensors for detection_graph
            image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')
            # Each box represents a part of the image where a particular object was detected.
            detection_boxes = detection_graph.get_tensor_by_name('detection_boxes:0')
            # Each score represents the level of confidence for each of the objects.
            # Score is shown on the result image, together with the class label.
            detection_scores = detection_graph.get_tensor_by_name('detection_scores:0')
            detection_classes = detection_graph.get_tensor_by_name('detection_classes:0')
            num_detections = detection_graph.get_tensor_by_name('num_detections:0')

            cap = cv2.VideoCapture(0)
            #cap = cv2.VideoCapture("/home/xbc/models/research/object_detection/20171218.avi")
            #cap = cv2.VideoCapture("rtsp://admin:[email protected]:554/Streaming/Channels/001")
            while(1):
                # get a frame
                ret, frame = cap.read()
                frame=cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
                #frame = cv2.resize(frame,(200,200))
                # show a frame
                # Expand dimensions since the model expects images to have shape: [1, None, None, 3]
                image_np_expanded = np.expand_dims(frame, axis=0)
                # Actual detection.
                (boxes, scores, classes, num) = sess.run(
                    [detection_boxes, detection_scores, detection_classes, num_detections],
                    feed_dict={image_tensor: image_np_expanded})
                print "boxes:",boxes
                # Visualization of the results of a detection.
                vis_util.visualize_boxes_and_labels_on_image_array(
                    frame,
                    np.squeeze(boxes),
                    np.squeeze(classes).astype(np.int32),
                    np.squeeze(scores),
                    category_index,
                    use_normalized_coordinates=True,
                    line_thickness=8)
                frame = cv2.cvtColor(frame,cv2.COLOR_RGB2BGR)
                cv2.imshow("capture", frame)
                if cv2.waitKey(100) & 0xFF == ord('q'):
                    break

    # In[ ]:
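
The two programs feed the network differently shaped inputs, which is worth keeping in mind when comparing them. The Python script passes an RGB uint8 array of shape `[1, H, W, 3]`, while `cv::dnn::blobFromImage` produces a float blob of shape `[1, 3, H, W]`. The NumPy-only sketch below just illustrates the two layouts. It is a simplification: `blob_style_input` is a made-up helper that does no resizing and no channel swap (in OpenCV the swap depends on the `swapRB` argument), and the 4x6 test frame is arbitrary.

```python
import numpy as np


def tf_style_input(frame_bgr):
    """Input as the TensorFlow script feeds it: RGB, uint8, shape [1, H, W, 3]."""
    rgb = frame_bgr[:, :, ::-1]  # BGR -> RGB, like cv2.COLOR_BGR2RGB
    return np.expand_dims(rgb, axis=0)


def blob_style_input(frame_bgr, scale=1.0 / 255):
    """Layout of a blobFromImage result: float32, shape [1, 3, H, W].

    Simplified: only scaling and the HWC -> CHW transpose are applied,
    no resizing and no channel swap.
    """
    chw = frame_bgr.astype(np.float32).transpose(2, 0, 1) * scale
    return np.expand_dims(chw, axis=0)


# Arbitrary 4x6 test frame that is pure blue in BGR order.
frame = np.zeros((4, 6, 3), dtype=np.uint8)
frame[..., 0] = 255

tf_input = tf_style_input(frame)
blob = blob_style_input(frame)
print(tf_input.shape, tf_input.dtype)
print(blob.shape, blob.dtype)
```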

I also put the code in pages 1~3, because it is hard for me to get the text code into the right format.
