
Here I will explain a method to decompose the transformation matrix H, following these two articles: Math and code.
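
For reference, this is the factorization that the formulas in Step 2 of the code correspond to. It is reconstructed from the code itself rather than quoted from the articles. It treats H as affine: if the first two rows of H are (a, b, c) and (d, e, f), the bottom row is assumed to be close to (0, 0, 1), so only the 2×2 block and the translation (c, f) are used.

$$
\begin{bmatrix} a & b \\ d & e \end{bmatrix}
=
\begin{bmatrix} p & 0 \\ 0 & r \end{bmatrix}
\begin{bmatrix} 1 & 0 \\ q & 1 \end{bmatrix}
\begin{bmatrix} \cos\theta & \sin\theta \\ -\sin\theta & \cos\theta \end{bmatrix},
\qquad
p = \sqrt{a^2 + b^2},\quad
r = \frac{ae - bd}{p},\quad
q = \frac{ad + be}{ae - bd},\quad
\theta = \operatorname{atan2}(b, a)
$$

Multiplying out, the first row gives (p cos θ, p sin θ) = (a, b), which fixes p and θ. The second row gives r(q cos θ − sin θ) = d and r(q sin θ + cos θ) = e, which solve to the r and q above. The rotation factor has the same form as the rotation part of `getRotationMatrix2D` for an angle of θ (converted to degrees), so rotating the scene by −θ removes it.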

Here is my trial code:

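```cpp
// OpenCV 2.4 headers (in this version SURF lives in the nonfree module)
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <opencv2/features2d/features2d.hpp>
#include <opencv2/nonfree/features2d.hpp>
#include <opencv2/calib3d/calib3d.hpp>
#include <vector>
using namespace cv;

// Read the input images
// (strObjectFile, strSceneFile and ERROR_READ_FILE come from the surrounding program)
Mat img_object = imread( strObjectFile, CV_LOAD_IMAGE_GRAYSCALE );
Mat img_scene = imread( strSceneFile, CV_LOAD_IMAGE_GRAYSCALE );
Mat img_scene_color = imread( strSceneFile, CV_LOAD_IMAGE_COLOR );
if( img_scene.empty() || img_object.empty() || img_scene_color.empty() )
{
    return ERROR_READ_FILE;
}
```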

```cpp
// Step 1: find the object in the scene and compute the homography H

//-- 1: Detect the keypoints using the SURF detector
int minHessian = 400;
SurfFeatureDetector detector( minHessian );
std::vector<KeyPoint> keypoints_object, keypoints_scene;
detector.detect( img_object, keypoints_object );
detector.detect( img_scene, keypoints_scene );

//-- 2: Calculate descriptors (feature vectors)
SurfDescriptorExtractor extractor;
Mat descriptors_object, descriptors_scene;
extractor.compute( img_object, keypoints_object, descriptors_object );
extractor.compute( img_scene, keypoints_scene, descriptors_scene );

//-- 3: Match descriptor vectors using the FLANN matcher
FlannBasedMatcher matcher;
std::vector< DMatch > matches;
matcher.match( descriptors_object, descriptors_scene, matches );
```
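
`FlannBasedMatcher::match` returns, for every object (query) descriptor, its nearest scene (train) descriptor as a `DMatch` holding `queryIdx`, `trainIdx` and `distance`. FLANN finds these neighbours approximately; the sketch below computes the same kind of result exactly by brute force with Euclidean (L2) distance, in plain C++ without OpenCV. The `Match` struct and the `bruteForceMatch` function are hypothetical stand-ins for illustration, not OpenCV API.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical stand-in for cv::DMatch (illustration only).
struct Match {
    int queryIdx;    // index into the query (object) descriptors
    int trainIdx;    // index into the train (scene) descriptors, -1 if none
    float distance;  // L2 distance between the two descriptors
};

using Descriptor = std::vector<float>;

// Euclidean distance between two descriptors of equal length.
float l2(const Descriptor& x, const Descriptor& y) {
    float sum = 0.0f;
    for (std::size_t k = 0; k < x.size(); ++k) {
        float diff = x[k] - y[k];
        sum += diff * diff;
    }
    return std::sqrt(sum);
}

// For each query descriptor, find the single nearest train descriptor.
std::vector<Match> bruteForceMatch(const std::vector<Descriptor>& query,
                                   const std::vector<Descriptor>& train) {
    std::vector<Match> matches;
    for (std::size_t i = 0; i < query.size(); ++i) {
        Match best{static_cast<int>(i), -1, std::numeric_limits<float>::max()};
        for (std::size_t j = 0; j < train.size(); ++j) {
            float dist = l2(query[i], train[j]);
            if (dist < best.distance) {
                best.trainIdx = static_cast<int>(j);
                best.distance = dist;
            }
        }
        matches.push_back(best);
    }
    return matches;
}
```

Brute force costs one distance computation per query/train pair, which is why an approximate index such as FLANN is used for large descriptor sets.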

```cpp
//-- Quick calculation of the max and min distances between matched descriptors
double max_dist = 0; double min_dist = 100;
for( size_t i = 0; i < matches.size(); i++ )
{
    double dist = matches[i].distance;
    if( dist < min_dist ) min_dist = dist;
    if( dist > max_dist ) max_dist = dist;
}

//-- Keep only "good" matches (i.e. whose distance is less than 3*min_dist)
std::vector< DMatch > good_matches;
for( size_t i = 0; i < matches.size(); i++ )
{
    if( matches[i].distance < 3*min_dist )
    {
        good_matches.push_back( matches[i] );
    }
}
```
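
The filter above keeps a match only if its distance is below three times the smallest distance found. One pitfall: if some descriptor pair matches exactly, `min_dist` is 0 and `distance < 3*min_dist` rejects every match. The sketch below isolates the rule in plain C++. The lower bound `minThreshold` (0.02 by default) is an assumption added for illustration and is not part of the original code; `goodMatchIndices` is likewise a hypothetical helper.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Return the indices of "good" matches: distance < factor * min_dist.
// The threshold is clamped from below by minThreshold (hypothetical
// safeguard, not in the original) so that min_dist == 0 does not
// reject every match.
std::vector<int> goodMatchIndices(const std::vector<double>& distances,
                                  double factor = 3.0,
                                  double minThreshold = 0.02) {
    std::vector<int> good;
    if (distances.empty()) return good;
    double minDist = *std::min_element(distances.begin(), distances.end());
    double threshold = std::max(factor * minDist, minThreshold);
    for (std::size_t i = 0; i < distances.size(); ++i) {
        if (distances[i] < threshold) good.push_back(static_cast<int>(i));
    }
    return good;
}
```

With distances {0.10, 0.25, 0.35, 0.50} the threshold is 0.30, so only the first two matches are kept.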

```cpp
Mat img_matches;
drawMatches( img_object, keypoints_object, img_scene, keypoints_scene,
             good_matches, img_matches, Scalar::all(-1), Scalar::all(-1),
             std::vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS );

// Save the image of the matched points
imwrite( "c:\\temp\\Matched_Points.png", img_matches );
```

```cpp
//-- Localize the object
std::vector<Point2f> obj;
std::vector<Point2f> scene;
for( size_t i = 0; i < good_matches.size(); i++ )
{
    //-- Get the keypoints from the good matches
    obj.push_back( keypoints_object[ good_matches[i].queryIdx ].pt );
    scene.push_back( keypoints_scene[ good_matches[i].trainIdx ].pt );
}

// H maps object coordinates to scene coordinates.
// findHomography needs at least 4 point pairs; check that before relying on H.
Mat H = findHomography( obj, scene, CV_RANSAC );
```

```cpp
//-- Get the corners from image_1 (the object to be "detected")
std::vector<Point2f> obj_corners(4);
obj_corners[0] = Point2f( 0, 0 );
obj_corners[1] = Point2f( img_object.cols, 0 );
obj_corners[2] = Point2f( img_object.cols, img_object.rows );
obj_corners[3] = Point2f( 0, img_object.rows );
std::vector<Point2f> scene_corners(4);
perspectiveTransform( obj_corners, scene_corners, H );

//-- Draw lines between the corners (the mapped object in the scene, image_2).
//   In img_matches the scene is drawn to the right of the object, hence the x offset.
Point2f offset( img_object.cols, 0 );
for( int i = 0; i < 4; i++ )
{
    line( img_matches, scene_corners[i] + offset, scene_corners[(i + 1) % 4] + offset, Scalar( 0, 255, 0 ), 4 );
}

//-- Save the detected matches
//imshow( "Good Matches & Object detection", img_matches );
imwrite( "c:\\temp\\Object_detection_result.png", img_matches );
```

```cpp
// Step 2: correct the scene scale and rotation, and locate the object in the recovered scene
Mat img_Recovered;

//-- 1: Decompose H. Only the upper-left 2x2 block and the translation column are used,
//      i.e. H is treated as affine and its perspective terms H(2,0), H(2,1) are ignored.
double a = H.at<double>(0,0);
double b = H.at<double>(0,1);
double c = H.at<double>(0,2);
double d = H.at<double>(1,0);
double e = H.at<double>(1,1);
double f = H.at<double>(1,2);

double p = sqrt(a*a + b*b);            // scale along x
double r = (a*e - b*d) / p;            // scale along y
double q = (a*d + b*e) / (a*e - b*d);  // shear

Point2d translation(c, f);
Point2d scale(p, r);
double shear = q;
double theta = atan2(b, a);                 // rotation angle in radians
double thetaDegrees = theta * 180 / CV_PI;  // getRotationMatrix2D expects degrees

//-- 2: Rotate and scale the scene to undo the rotation and scale found in H.
//      H scales the object by p, so the scene is scaled by 1/p to undo it.
Point2f pt(img_scene.cols/2., img_scene.rows/2.);  // rotation centre
double undoScale = 1.0 / scale.x;
Mat rotMat = getRotationMatrix2D(pt, -thetaDegrees, undoScale);

// Compute the new image size so that no part of the rotated, scaled scene is cut off
Size2f scaledSize(img_scene.cols * undoScale, img_scene.rows * undoScale);
cv::Rect bbox = cv::RotatedRect(pt, scaledSize, -thetaDegrees).boundingRect();

// Shift the transformation so the result is centred in the new image size
rotMat.at<double>(0,2) += bbox.width/2.0 - pt.x;
rotMat.at<double>(1,2) += bbox.height/2.0 - pt.y;
Size ImgSize = bbox.size();

// Scalar::all(255) gives a white border on the 3-channel colour image
warpAffine(img_scene_color, img_Recovered, rotMat, ImgSize, INTER_LANCZOS4, BORDER_CONSTANT, Scalar::all(255));
```
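
The decomposition at the start of Step 2 can be checked in isolation. The stand-alone sketch below performs the same arithmetic on a 2×2 block and rebuilds the matrix from the parts; like the code above, it ignores the perspective row of H and assumes a non-degenerate matrix (p and the determinant are non-zero). `Decomposition`, `decompose`, `recompose` and `toDegrees` are illustrative names.

```cpp
#include <cmath>

struct Decomposition {
    double p;      // scale along x
    double r;      // scale along y
    double q;      // shear
    double theta;  // rotation angle in radians
};

const double kPi = 3.14159265358979323846;

// [[a, b], [d, e]] = diag(p, r) * [[1, 0], [q, 1]] * [[cos t, sin t], [-sin t, cos t]]
Decomposition decompose(double a, double b, double d, double e) {
    double p = std::sqrt(a * a + b * b);
    double det = a * e - b * d;
    return {p, det / p, (a * d + b * e) / det, std::atan2(b, a)};
}

// Rebuild the 2x2 matrix (row-major: a, b, d, e) from its parts.
void recompose(const Decomposition& k, double out[4]) {
    double c = std::cos(k.theta), s = std::sin(k.theta);
    out[0] = k.p * c;
    out[1] = k.p * s;
    out[2] = k.r * (k.q * c - s);
    out[3] = k.r * (k.q * s + c);
}

double toDegrees(double radians) { return radians * 180.0 / kPi; }
```

For a pure rotation-plus-scale block, for example scale 2 and 30 degrees, the sketch returns p = r = 2, q = 0 and an angle of 30 degrees; a general block such as [[1, 2], [3, 4]] is reproduced exactly (up to rounding) by `recompose`.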

```cpp
// Now map the object corners detected in Step 1 into the recovered (warped) image
std::vector<Point2f> obj_corners_warped(4);
transform(scene_corners, obj_corners_warped, rotMat);

//-- Draw lines between the corners (the object in the recovered scene)
for( int i = 0; i < 4; i++ )
{
    line( img_Recovered, obj_corners_warped[i], obj_corners_warped[(i + 1) % 4], Scalar( 0, 255, 0 ), 4 );
}
imwrite( "c:\\temp\\Object_detection_Recovered.png", img_Recovered );
```
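
The second half of Step 2 builds a 2×3 matrix with `getRotationMatrix2D`, enlarges the canvas to the rotated bounding box, shifts the matrix so the result is centred, and maps the detected corners with `transform`. The sketch below reproduces that arithmetic without OpenCV, using the matrix formula given in the `getRotationMatrix2D` documentation (angle in degrees, positive means counter-clockwise with the origin at the top-left). The canvas size uses the usual rotated-rectangle extent; OpenCV's `boundingRect()` returns an integer rectangle, so its values can differ by a pixel. `Affine`, `P2`, `rotationMatrix`, `applyAffine`, `rotatedExtent` and `recenter` are illustrative names.

```cpp
#include <cmath>

struct Affine { double m[2][3]; };  // 2x3 matrix, same layout as rotMat
struct P2 { double x, y; };

const double kPi = 3.14159265358979323846;

// Same formula as cv::getRotationMatrix2D(center, angleDeg, scale).
Affine rotationMatrix(P2 center, double angleDeg, double scale) {
    double a = scale * std::cos(angleDeg * kPi / 180.0);
    double b = scale * std::sin(angleDeg * kPi / 180.0);
    return {{{a, b, (1 - a) * center.x - b * center.y},
             {-b, a, b * center.x + (1 - a) * center.y}}};
}

// x' = m00*x + m01*y + m02, y' = m10*x + m11*y + m12,
// which is what cv::transform does with a 2x3 matrix on 2D points.
P2 applyAffine(const Affine& A, P2 p) {
    return {A.m[0][0] * p.x + A.m[0][1] * p.y + A.m[0][2],
            A.m[1][0] * p.x + A.m[1][1] * p.y + A.m[1][2]};
}

// Width and height of the axis-aligned box around a w x h image after rotation and scaling.
P2 rotatedExtent(double w, double h, double angleDeg, double scale) {
    double c = std::fabs(std::cos(angleDeg * kPi / 180.0));
    double s = std::fabs(std::sin(angleDeg * kPi / 180.0));
    return {scale * (w * c + h * s), scale * (w * s + h * c)};
}

// Shift the matrix so the result is centred in a canvas of size `extent`
// (mirrors the two rotMat.at<double>(..., 2) += ... lines above).
void recenter(Affine& A, P2 center, P2 extent) {
    A.m[0][2] += extent.x / 2.0 - center.x;
    A.m[1][2] += extent.y / 2.0 - center.y;
}
```

For a 200×100 image rotated by 90 degrees about its centre (100, 50), the canvas becomes 100×200 and, after recentring, the top-right corner (200, 0) lands at (0, 0) while the bottom-left corner (0, 100) lands at (100, 200), so every corner stays inside the new image.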

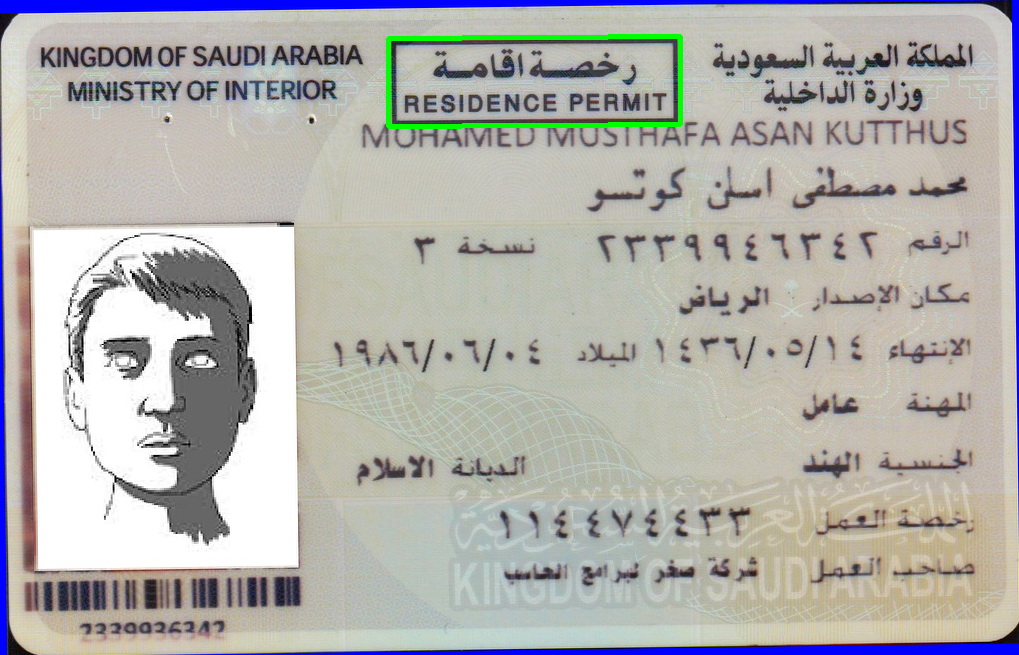

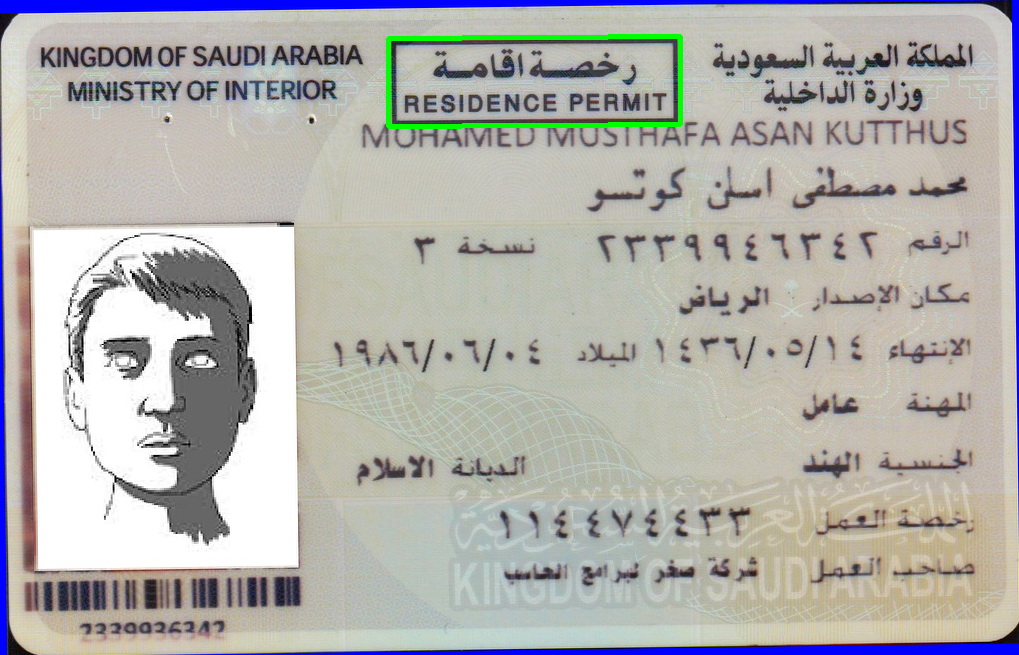

These are the resulting images, with a rectangle drawn around the detected object.
